|

| def | __init__ (self) |

| |

| def | open (self, url, method='get', headers=None, timeout=100, allow_redirects=True, proxies=None, auth=None, data=None, log=None, allowed_content_types=None, max_resource_size=None, max_redirects=CONSTS.MAX_HTTP_REDIRECTS_LIMIT, filters=None, executable_path=None, depth=None, macro=None) |

| |

| def | should_have_meta_res (self) |

| |

| def | getDomainNameFromURL (self, url, default='') |

| |

|

| def | init (dbWrapper=None, siteId=None) |

| |

| def | get_fetcher (typ, dbWrapper=None, siteId=None) |

| |

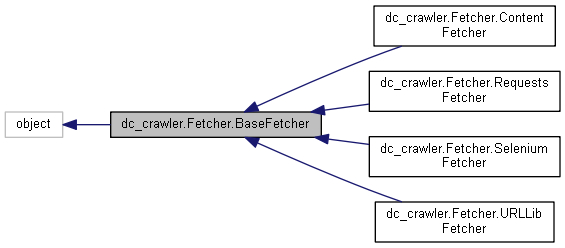

Definition at line 50 of file Fetcher.py.

◆ __init__()

| def dc_crawler.Fetcher.BaseFetcher.__init__ |

( |

|

self | ) |

|

Definition at line 65 of file Fetcher.py.

66 self.connectionTimeout = self.CONNECTION_TIMEOUT

def __init__(self)

constructor

◆ get_fetcher()

| def dc_crawler.Fetcher.BaseFetcher.get_fetcher |

( |

|

typ, |

|

|

|

dbWrapper = None, |

|

|

|

siteId = None |

|

) |

| |

|

static |

Definition at line 121 of file Fetcher.py.

121 def get_fetcher(typ, dbWrapper=None, siteId=None):

122 if not BaseFetcher.fetchers:

123 BaseFetcher.init(dbWrapper, siteId)

124 if typ

in BaseFetcher.fetchers:

125 return BaseFetcher.fetchers[typ]

127 raise BaseException(

"unsupported fetch type:%s" % (typ,))

◆ getDomainNameFromURL()

| def dc_crawler.Fetcher.BaseFetcher.getDomainNameFromURL |

( |

|

self, |

|

|

|

url, |

|

|

|

default = '' |

|

) |

| |

Definition at line 142 of file Fetcher.py.

142 def getDomainNameFromURL(self, url, default=''):

145 urlParts = urlsplit(url)

146 if len(urlParts) > 1:

◆ init()

| def dc_crawler.Fetcher.BaseFetcher.init |

( |

|

dbWrapper = None, |

|

|

|

siteId = None |

|

) |

| |

|

static |

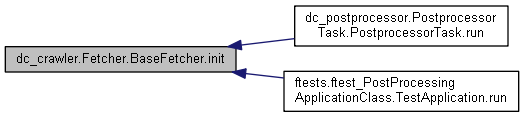

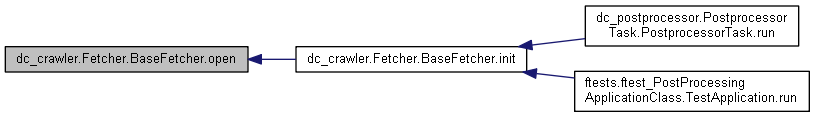

Definition at line 71 of file Fetcher.py.

71 def init(dbWrapper=None, siteId=None):

73 BaseFetcher.prohibited_conten_types = [

"audio/mpeg",

"application/pdf"]

75 BaseFetcher.fetchers = {

76 BaseFetcher.TYP_NORMAL : RequestsFetcher(dbWrapper, siteId),

77 BaseFetcher.TYP_DYNAMIC: SeleniumFetcher(),

78 BaseFetcher.TYP_URLLIB: URLLibFetcher(),

79 BaseFetcher.TYP_CONTENT: ContentFetcher()

◆ open()

| def dc_crawler.Fetcher.BaseFetcher.open |

( |

|

self, |

|

|

|

url, |

|

|

|

method = 'get', |

|

|

|

headers = None, |

|

|

|

timeout = 100, |

|

|

|

allow_redirects = True, |

|

|

|

proxies = None, |

|

|

|

auth = None, |

|

|

|

data = None, |

|

|

|

log = None, |

|

|

|

allowed_content_types = None, |

|

|

|

max_resource_size = None, |

|

|

|

max_redirects = CONSTS.MAX_HTTP_REDIRECTS_LIMIT, |

|

|

|

filters = None, |

|

|

|

executable_path = None, |

|

|

|

depth = None, |

|

|

|

macro = None |

|

) |

| |

Definition at line 109 of file Fetcher.py.

112 del url, method, headers, timeout, allow_redirects, proxies, auth, data, log, allowed_content_types, \

113 max_resource_size, max_redirects, filters, executable_path, depth, macro

◆ should_have_meta_res()

| def dc_crawler.Fetcher.BaseFetcher.should_have_meta_res |

( |

|

self | ) |

|

Definition at line 133 of file Fetcher.py.

133 def should_have_meta_res(self):

◆ CONNECTION_TIMEOUT

| float dc_crawler.Fetcher.BaseFetcher.CONNECTION_TIMEOUT = 1.0 |

|

static |

◆ connectionTimeout

| dc_crawler.Fetcher.BaseFetcher.connectionTimeout |

◆ fetchers

| dc_crawler.Fetcher.BaseFetcher.fetchers = None |

|

static |

◆ logger

| dc_crawler.Fetcher.BaseFetcher.logger |

◆ TYP_AUTO

| int dc_crawler.Fetcher.BaseFetcher.TYP_AUTO = 7 |

|

static |

◆ TYP_CONTENT

| int dc_crawler.Fetcher.BaseFetcher.TYP_CONTENT = 6 |

|

static |

◆ TYP_DYNAMIC

| int dc_crawler.Fetcher.BaseFetcher.TYP_DYNAMIC = 2 |

|

static |

◆ TYP_NORMAL

| int dc_crawler.Fetcher.BaseFetcher.TYP_NORMAL = 1 |

|

static |

◆ TYP_URLLIB

| int dc_crawler.Fetcher.BaseFetcher.TYP_URLLIB = 5 |

|

static |

The documentation for this class was generated from the following file: